The ELK stack gives many ways to centralize logs. One way is to collect the log files with Filebeat, send them to Logstash to split the fields, and then send the results to Elasticsearch.

Next, copy the log file to the C:/elk folder. Open filebeat.yml and add the Filebeat configuration. In it we specify the log location for Filebeat to read from. The hosts setting specifies the Logstash server and the port on which Logstash is configured to listen for incoming Beats connections.

The Logstash configuration has three parts. It reads input from Filebeat by listening on port 5044, the port to which Filebeat sends the data. If a log line contains a tab character followed by 'at', it tags that entry as a stacktrace. Finally, it sends the properly parsed log events to Elasticsearch.
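The field splitting and stacktrace tagging described above can be sketched in plain Python. This is only an illustration of the idea, not the Logstash configuration itself: the log layout (`<date> <time> <LEVEL> <message>`), the function name `parse_line`, and the `parse_failure` tag are assumptions made for the example.

```python
import re

# Hypothetical log layout, assumed for illustration:
#   "<date> <time> <LEVEL> <message>"
LOG_LINE = re.compile(
    r"^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) "
    r"(?P<level>[A-Z]+) "
    r"(?P<message>.*)$"
)


def parse_line(line: str) -> dict:
    """Turn one raw log line into a structured event, loosely mimicking a grok filter."""
    event = {"raw": line, "tags": []}

    # Rule from the text: a tab character followed by 'at' marks a stacktrace line.
    if "\tat" in line:
        event["tags"].append("stacktrace")
        return event

    match = LOG_LINE.match(line)
    if match:
        # Copy the named groups (timestamp, level, message) into the event.
        event.update(match.groupdict())
    else:
        # Hypothetical tag name for lines that fit neither rule.
        event["tags"].append("parse_failure")
    return event


if __name__ == "__main__":
    sample = [
        "2020-08-29 17:33:01 ERROR Something failed",
        "\tat com.example.Demo.run(Demo.java:42)",
    ]
    for raw in sample:
        print(parse_line(raw))
```

In a real pipeline the same two rules would live in the Logstash filter section, with grok doing the pattern matching instead of a hand-written regular expression.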

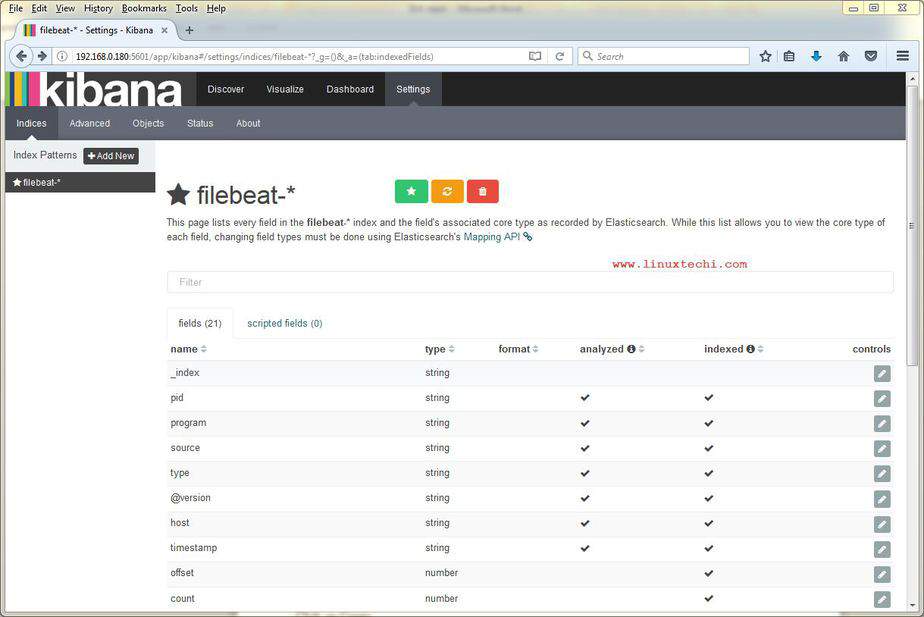

This tutorial is explained in the YouTube video below.

Download the latest version of Elasticsearch from Elasticsearch downloads. Run elasticsearch.bat from the command prompt. Elasticsearch can then be accessed at localhost:9200.

Download the latest version of Kibana from Kibana downloads. Modify kibana.yml to point to the Elasticsearch instance. Run kibana.bat from the command prompt. The Kibana UI can then be accessed at localhost:5601.

Download the latest version of Logstash from Logstash downloads. When using the ELK stack we ingest data into Elasticsearch, but the data is initially unstructured. We first need to break the data into a structured format and then ingest it into Elasticsearch. Such data can later be used for analysis. This transformation of unstructured data into structured data is done by Logstash, which uses the grok filter to achieve it. An online Grok Pattern Generator tool is available for creating, testing, and debugging the grok patterns required for Logstash.

Similar to how we did in the Spring Boot + ELK tutorial, create a configuration file named nf. Here Logstash is configured to listen for incoming Beats connections on port 5044. On receiving input, Logstash filters it and indexes it into Elasticsearch.

Filebeat is an open source shipping agent that lets you ship logs from local files to one or more destinations, including Logstash and Elasticsearch. Filebeat installs in the /etc/filebeat folder and, just like the other Elasticsearch products, requires some configuration and file modification to get going. Connections to Elasticsearch and Kibana are required to set up Filebeat. Set the connection information in filebeat.yml. To locate this configuration file, see Directory layout. For Elasticsearch Service, specify the cloud.id of your Elasticsearch Service, and set th to a user who is authorized to set up Filebeat.

Filebeat comes packaged with sample Kibana dashboards that allow you to visualize Filebeat data in Kibana. Before you can use the dashboards, you need to create the index pattern and load the dashboards into Kibana. As the dashboards load, Filebeat connects to Elasticsearch to check version information. Please see the Start Filebeat documentation for more details.

MySQL slow query logs are controlled by a handful of settings. One setting specifies whether to enable slow query logs: the value on indicates that slow query logs are enabled, and the value off indicates that they are disabled. The slow_query_log_file setting specifies the storage path of slow query logs. Another setting specifies the time threshold used to define a slow query: when the query time exceeds the threshold, the MySQL database writes the query into the file that is specified by slow_query_log_file. A further setting specifies whether to record a query for which no indexes are specified as a slow query log: the value 1 indicates that the system records such a query, and the value 0 indicates that it does not.

slow_query_log_file=/var/log/mysql/slow-mysql-query.log
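The slow query settings described above combine into a simple decision, which can be modeled in Python as a rough sketch. The text only names `slow_query_log_file`; the other field names (`slow_query_log`, `long_query_time`, `log_queries_not_using_indexes`) and the helper `should_log` are assumptions chosen for this example, and the model deliberately ignores any other conditions a real server may apply.

```python
from dataclasses import dataclass


@dataclass
class SlowLogSettings:
    # Field names other than slow_query_log_file are assumed for illustration.
    slow_query_log: bool = True                  # on/off switch for slow query logs
    long_query_time: float = 10.0                # time threshold in seconds (assumed value)
    log_queries_not_using_indexes: bool = False  # 1/0 switch for index-less queries
    slow_query_log_file: str = "/var/log/mysql/slow-mysql-query.log"


def should_log(settings: SlowLogSettings, query_seconds: float, used_index: bool) -> bool:
    """Simplified model: would this query be written to the slow query log?"""
    if not settings.slow_query_log:
        # Logging is switched off entirely.
        return False
    if query_seconds > settings.long_query_time:
        # The query time exceeds the threshold.
        return True
    # Below the threshold: log only if index-less queries are recorded
    # and this query did not use an index.
    return settings.log_queries_not_using_indexes and not used_index


if __name__ == "__main__":
    settings = SlowLogSettings(long_query_time=2.0, log_queries_not_using_indexes=True)
    for seconds, used_index in [(3.5, True), (0.1, False), (0.1, True)]:
        print(seconds, used_index, should_log(settings, seconds, used_index))
```

Queries for which `should_log` returns true are the ones that would end up in the file named by `slow_query_log_file`, which is the file Filebeat would then be pointed at.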
